The Fake Fire Brigade Revisited #3 - The Biggest Part of Business As Usual - Electricity

Posted by nate hagens on September 5, 2010 - 10:45am

Below the fold is the 3rd in a series of follow-up posts providing analysis on the difficulties of maintaining our current energy paradigm with renewable energy (generally, 'the fake fire brigade'). The main authors are Hannes Kunz, President of Institute for Integrated Economic Research (IIER) and Stephen Balogh, a PhD student at SUNY-ESF and Senior Research Associate at IIER. IIER is a non-profit organization that integrates research from the financial/economic system, energy and natural resources, and human behavior with an objective of developing/initiating strategies that result in more benign trajectories after global growth ends. The authors have written an extensive follow-up to the questions raised in the original posting and I've broken it into 5 pieces for readability - the 3rd installment, with a focus on electricity generation in an energy transition, is below the fold. This installment has been delayed a few weeks due to Hannes taking time off to get married....

The Biggest Part of Business As Usual - Electricity

In this third installment in this series, we want to put some emphasis on one of the most important enablers of human civilization of the 20th century: electricity. Its ubiquitous availability from every power plug is something we take for granted, despite the fact that stable electricity production is probably one of the most complex continuous endeavors of mankind, and one where many poorer countries fail.

In this post we would like to provide an overview of some of the properties of electricity, describe its nature (as a flow based system), and explain what challenges it faces in the future – especially those related to maintaining current delivery patterns once we have to increasingly rely on inputs no longer coming from fossil fuels that can be stored and burned mostly at our discretion, but from increasingly stochastic, largely uncorrelated flows such as solar or wind.

Electricity is a core topic of IIER’s research, because for us, maintaining anything that more or less resembles our current advanced economies is synonymous with uninterrupted, reliable electricity which mostly comes as a discretionary service to the user. Users, in this case, aren’t just private consumers, but also industrial and commercial applications, which are part of any advanced society.

Electric power is also the area of greatest debate, greatest hope and greatest investment, and the area where IIER thinks that societies face challenges with all their current attempts. Presently, OECD countries are targeting electricity generation as a means to meet carbon emission reduction goals, while simultaneously encouraging the development of non-fossil fuel based transportation (e.g. electric vehicles) and other moves away from coal and oil in industrial applications. They do this – so we think – without a robust plan as to how to maintain today’s delivery security. All plans aim at combining wind, solar, geothermal, and nuclear, super- and smart grids into one new robust delivery system, and there seems to be general agreement that this will actually work. But after thorough and unbiased research of the characteristics of electricity delivery systems, the parameters of those new technologies and the discrepancies between assumptions and reality, we are now skeptical as to whether societies will be able to provide stable electricity at acceptable prices going forward. We realize that this statement is almost considered a sacrilege.

Below, we will try to explain our concerns step by step, and why we fear that investing hundreds of billions in an electricity system that is far more complex and far less reliable will lead us in the wrong direction, given the details of our current situation. Once again, a clarification: we are not arguing the fact that we slowly have to move away from fossil fuels and start using more renewable sources to provide our energy needs. However, we disagree with the common notion that societies can make this renewable energy transition and still receive the same services as today: stable and affordable electricity not just for private consumption, but for all uses that are part of an advanced industrialized society.

IIER’s Electricity Availability Index

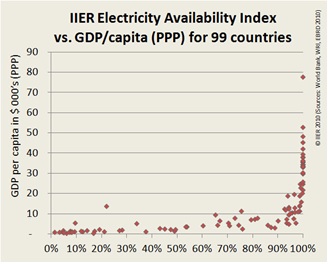

In our first post, we introduced IIER’s Electricity Availability Index. It measures the availability of electricity in a country based on penetration (% of population with electricity) and reliability (outages and duration of outages per average customer).

Figure 1 – IIER Electricity availability index

Some commenters questioned the relationship between electricity and wealth (measured in purchasing-power adjusted GDP per capita). This was the first hypothesis we tested when developing the EAI metric. The chicken-and-egg question can - as we think - be resolved quite easily, by testing in which directions we find the outliers. If the assumption that “wealth is possible without stable electricity” is correct, there should be countries with low electricity availability that still are quite rich (measured in GDP per capita). However, these do not exist; the “richest” outlier is resource-rich Botswana (diamonds, copper, nickel) with close to $14’000 per capita and an EAI of only 21.9%. On the other hand, we do find rather poor countries with almost 90% electricity availability (such as The Philippines and Mongolia, with a per capita GDP of around $3’500), which leads to the conclusion that the correlation is unidirectional, or in other words: You don't have to be rich to have stable electricity, but your country needs stable electricity to become (or stay) rich.

The benefits of electricity

There are two discrete aspects of electricity’s importance to society: the benefit of its ubiquitous on-demand availability, and the severe side-effects of power interruptions. Let’s look at a simple illustration. Few companies in OECD countries install backup power for desktop computers, despite the risk of data loss during a power outage. The reason is economic – outages are so rare that the cost of buying, maintaining and operating the backup equipment outweighs the risk of an outage, which is why only servers and data centers are deemed worthy of investment in power backup solutions. In emerging or developing countries, backup systems are commonplace, but only where businesses can afford them. Most local businesses cannot, which makes backup primarily an option for international corporations, while local companies are at a disadvantage.

Other applications, particularly of industrial nature, can’t even operate with backups; they simply need a power guarantee. The pots of an aluminum smelter require uninterrupted power 24/7, 365 days a year. If the power is lost for more than a few hours, not only does the process stop, but after a short while the aluminum begins to congeal, with the consequence that the entire pot has to be scrapped, incurring costs of millions of dollars. Or think of a shopping mall that suddenly goes dark. No lights except for emergency lighting, no access to transaction services to process a credit or debit card, no elevators or escalators, and ultimately no sales. There are multiple studies on the cost of “reliability events” in power grids, each reporting very significant losses (a lot of research has been done at Berkeley Lab, documents can be found at: http://certs.lbl.gov/CERTS_P_Reliability.html). So while – as many people correctly say - power outages are just a nuisance to private households as long as they don’t exceed the time a fridge or freezer can hold its temperature, they are a threat to all more complex industrial and commercial activities that make our societies “advanced” and require the humming of electricity-driven machinery almost around the clock.

This now ties back to the Electricity Availability Index – many things are either impossible or economically not feasible in environments where grid stability becomes an issue. And even for applications where it is theoretically possible to ramp them up and down without efficiency or material losses based on energy availability, there are significant social costs associated with unpredictability. If there is no power, should we send all the workers home for a week, and call them again at 1am on the Sunday when supply comes back? We can certainly do this, but in reality we would probably rather cease many of those activities, because the opportunity cost of underutilized equipment and labor becomes so big that the final objective no longer makes economic sense.

What is electricity and how is it delivered

There are two ways that electricity is supplied. In smaller, poorer, or more remote areas, electrical production is achieved by a standalone solution that provides comfort or capabilities to those able to afford it. Often this is provided by diesel generators which can produce electricity as required, or by standalone hydro, coal or natural gas power plants which serve a local area or industrial activity. Increasingly, solar panels combined with batteries provide this service, or wind turbines in conjunction with oil based generators. The key characteristic of this type of delivery system usually is very high cost per delivered kWh.

In richer economies or even in urban areas almost all around the world, electricity is delivered via a centrally managed grid, which balances inputs and outputs effectively to ensure that demand is always met. In poorer countries, this often does not work out, with the consequence of regular grid breakdowns. In OECD countries, however, we are so used to the grid’s reliability that even small power outages regularly make the news headlines. Below, we will mostly focus on grid based systems, as only those are capable of delivering the basic industrial and commercial services for societies we are used to receiving.

What we get from our power sockets as “electricity” is the product of an electric current that is converted into useful work by an appliance. To make sure that those appliances work, particularly more fragile ones involving electronics, voltage and frequency must be standardized across entire regions (for example 120V/60Hz in North America or 230V/50Hz in Europe).

An electricity delivery system can be compared to a complex set of water pipes where water (electricity) enters at multiple points and is withdrawn at hundreds of thousands of faucets. Contrary to a water delivery system, these electrical ‘pipes and faucets’ are so fragile that they almost immediately burst or collapse when too much or too little water is in the system. Or in other words – electricity is a fully flow based system, where inputs and outputs have to be matched at any point in time with deviations of less than 0.5% between supply and demand (see ENTSO-E manuals for more detail: https://www.entsoe.eu/index.php?id=57, particularly the one on “Emergency Procedures”).
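The matching requirement can be illustrated with a simple tolerance check. This is only a minimal Python sketch: it assumes the 0.5% figure is measured relative to demand, and the function name and sample values are hypothetical, not taken from the ENTSO-E manuals.

```python
def within_tolerance(supply_mw: float, demand_mw: float, tolerance: float = 0.005) -> bool:
    """Return True if supply deviates from demand by no more than `tolerance`.

    Assumption: the deviation is expressed as a fraction of demand.
    """
    if demand_mw <= 0:
        raise ValueError("demand must be positive")
    return abs(supply_mw - demand_mw) / demand_mw <= tolerance


# Hypothetical grid with 50,000 MW of demand
print(within_tolerance(50_200, 50_000))  # 0.4% oversupply
print(within_tolerance(49_700, 50_000))  # 0.6% undersupply
```

In a grid of this hypothetical size, the allowed band is only 250 MW in either direction, which is less than the output of a single large power plant.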

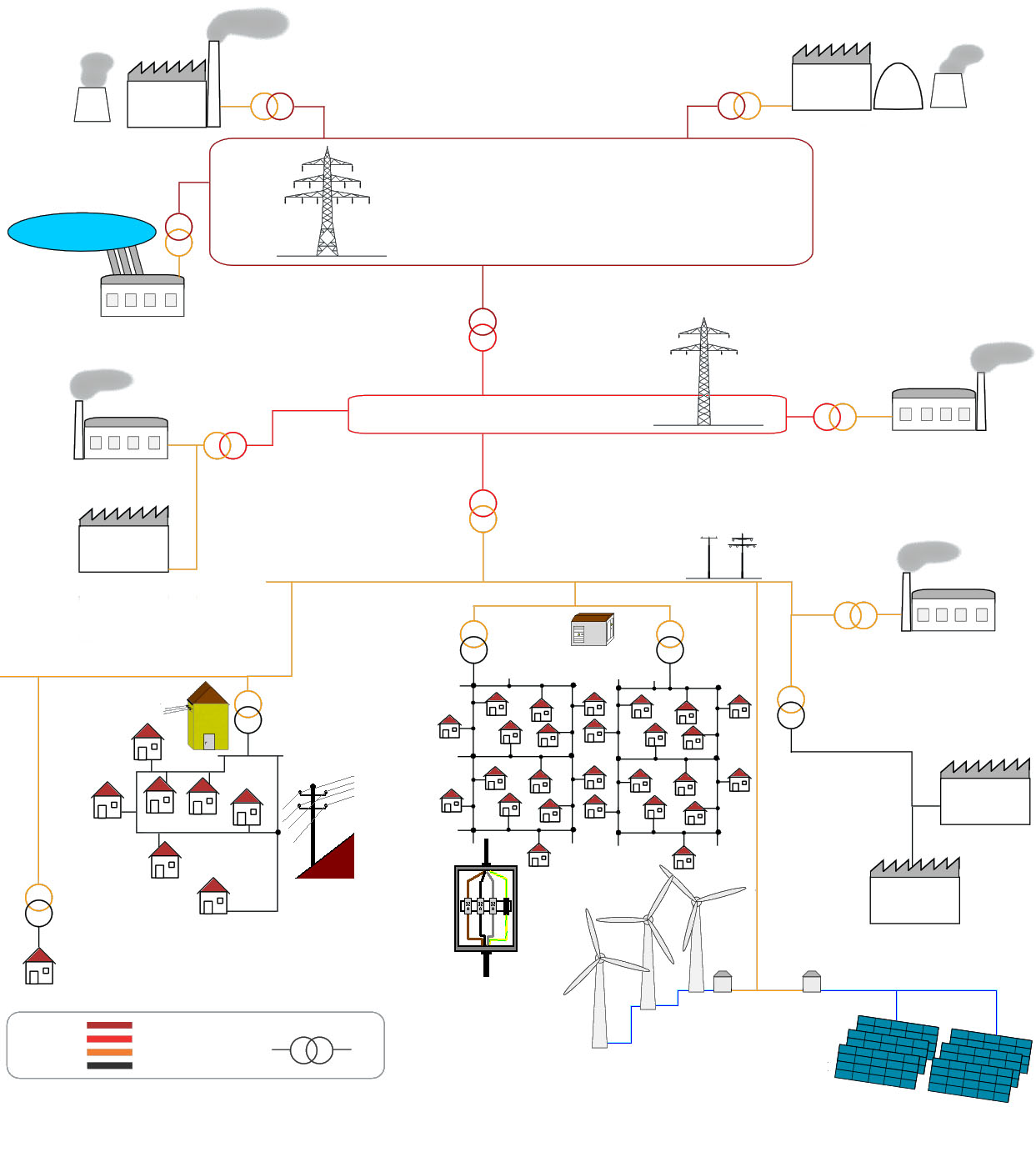

Figure 2: Grid based system (Source)

Currently, this system is fully supply-controlled (i.e. production is following expected and actual demand), which is why it has become so beneficial to society. It delivers seemingly unlimited and unrestricted amounts of energy to each room in our homes, offices and factories, and except for heavy loads in industry or computing (server farms), there is no user-level planning required before flipping a switch, plugging in a heater, or turning on a computer. Electricity just flows according to one’s needs. Later, we will examine demand side flexibility, but first, we want to focus on the supply side, which is where electricity systems are controlled today.

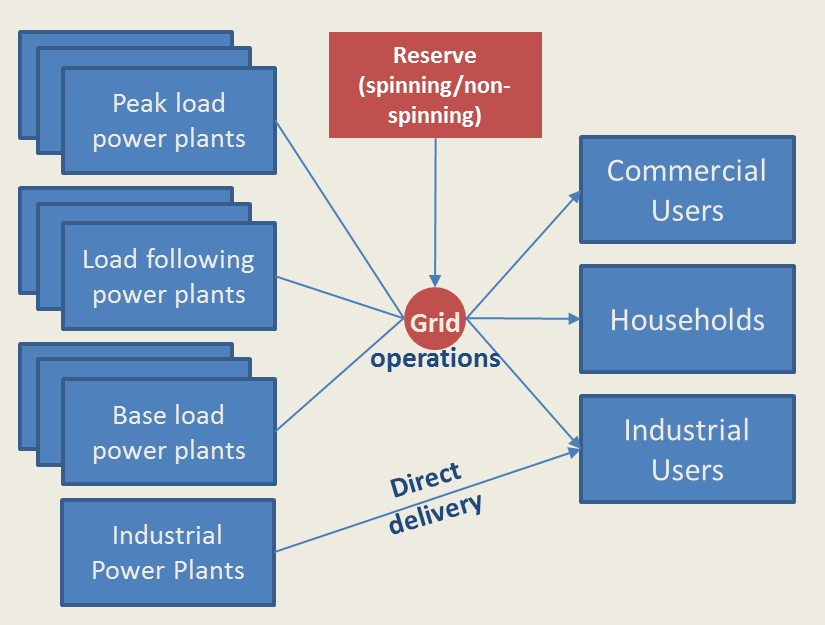

Figure 3 – schematic delivery system (current status)

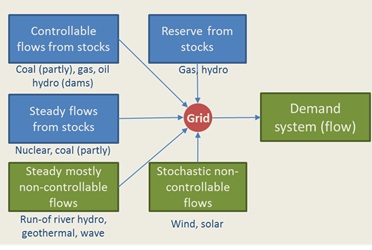

Demand, which follows the cycles of human ecosystem patterns (days, nights, work/non-work days, heat, cold), is today matched by a combination of power sources that together form a highly flexible supply system, which also includes reserves to match unexpected demand spikes or sudden supply-side failures, for example when a power plant experiences an emergency shutdown. We will dive into the different load patterns and reserve provisions a little further down, but the key characteristic of a vast majority of inputs today is that they are fully predictable and mostly controllable. This is because some inputs come from steady flows (like a running river), but the large majority come from stock-based resources that can be consumed whenever there is a need, such as coal, natural gas, stored water or nuclear power (the latter could, for reasons to be discussed further down, also be seen as a steady flow). So in essence, what we have built is a highly complex system that converts steady flows and stocks into a well-managed, demand driven flow of electric current.

Figure 4 – types of inputs into electricity grids

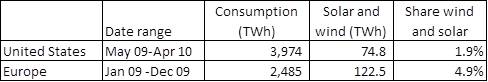

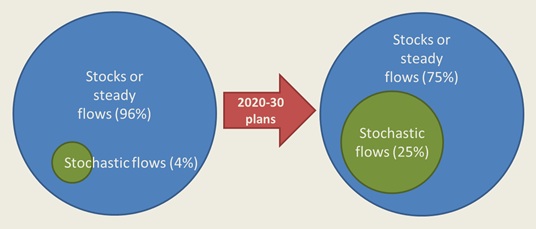

What most OECD countries plan to do is to replace some of those steady flows or stocks on the supply side by adding more and more renewables with erratic flows. Currently, those stochastic, non-controllable flows from solar and wind power account for a maximum of 5% of total power production in any interconnected grid system we are aware of [see Table 1 for the U.S. (combining Western and Eastern interconnection for lack of data) and for the European interconnected grid system – ENTSO-E], but by 2030, most countries in the Western world plan for 20 or 30% of electricity to be delivered from those two sources alone, accompanied by other new technologies.

Table 1: wind and solar power share in 2009/10 for major grid systems (EIA 2010, ENTSOE 2010)

In Europe, the almost 5% of solar and wind are very unevenly distributed, with some countries totaling close to 0%, and others already experiencing up to 20% (Denmark) from those renewable sources. All those countries with high shares manage their problems with the significant help of their neighbors. Very small Denmark, for example, uses the comparably huge hydropower systems in Norway and Sweden to buffer its heavily variable wind outputs.

This grand plan – to maintain something that already now is highly complex by adding multiple layers of complexity – is something we are very concerned about. The overarching challenge is to keep a flow-based demand system working while stochastic, non-controllable flows gain a significant share of supply, and to do so without jeopardizing grid stability, and at a price which is still affordable. We believe that most people underestimate this challenge and that it actually may be insurmountable. Important: “affordable” in this case doesn’t mean that the price can be paid by individual households for their relatively small amount of required electricity, as they may be able to bear 20 or 25 cents for a kWh, but that it can be paid by an entire industrialized society with the need to provide all the goods and services that make it what is considered “advanced”.

Figure 5 – shift to larger amounts of stochastic flows

What is an acceptable price for electricity?

What a high cost of oil does to societies has been well researched and documented in a number of papers (see: http://www.iiasa.ac.at/Research/ECS/IEW2005/docs/ppt/IEW2005_Maeda.ppt). High oil prices seem to be a clear inhibitor of economic growth and an early indicator of coming recessions. The reason behind this is the fact that the higher the cost for energy is, the less of our efforts can go towards discretionary spending (Hall, Powers and Schoenberg 2008). It is an inherent property of EROI: the energy and money we spend to procure and extract energy are unavailable to spend on discretionary and non-discretionary investment and consumption.

There is no reason why the situation should be different for energy inputs other than oil, as higher energy costs always lead to this diversion away from consumption and investment. However, creating a benchmark is not easy, as electricity rates have been relatively steady during the times when oil prices fluctuated heavily, which gives us no past reference.

Using oil, where a relatively solid research base exists, we wanted to create a benchmark for “tolerable” electricity prices. Some papers suggest that oil prices that grow from 25 to 35 dollars have a negative impact of 0.3-0.5% on GDP in various countries (http://www.iea.org/papers/2004/high_oil_prices.pdf). We currently are at around $80/barrel, and are still in the middle of a bad crisis, which just looks less bad because governments have started to run up deficits at a breathtaking pace. At $150/barrel, in 2008, the current recession began with a vengeance, and many researchers suggest that high oil prices had their fair share in triggering it.

So based on experiences from 2008, we can probably assume that oil prices around $150 per barrel choke many economic activities, as the marginal cost becomes unbearable for many private and commercial consumers alike. Even at the current price of approximately $80/bbl, transportation and other energy-intensive sectors are under heavy pressure, and oil prices push commodity prices up. As a reminder: During the past 50 years, the median price for oil stood at about $25/bbl (inflation adjusted to current dollars). If we look at energy content in a barrel of oil (6.1 GJ or 1700 kWh), a price of $150 translates to a cost per kWh of 8.8 cents, $25 translates to 1.5 cents per kWh in oil.
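The per-kWh figures above follow directly from the stated energy content of a barrel. A minimal Python sketch of that conversion, using only the 1700 kWh per barrel from the text (the function name is our own, chosen for illustration):

```python
KWH_PER_BARREL = 1700  # energy content of a barrel of oil as stated in the text (about 6.1 GJ)


def oil_cents_per_kwh(price_usd_per_barrel: float) -> float:
    """Convert an oil price in $/barrel into cents per kWh of energy content."""
    return price_usd_per_barrel / KWH_PER_BARREL * 100


for price in (25, 80, 150):
    print(f"${price}/bbl -> {oil_cents_per_kwh(price):.1f} cents/kWh")
```

This reproduces the 1.5 cents at $25 and the 8.8 cents at $150, and puts the current price of about $80 at roughly 4.7 cents per kWh.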

The difficulty now comes in finding a meaningful comparison between oil and electricity. Oil is a high quality and high density raw energy source with excellent properties with respect to transportation, storage and processing, while electricity provides a distributed service at a comparably high quality. We assume that the same energy content in electricity is of higher value to society when compared to oil, which thus can bear a higher cost for the same amount of energy (this was also part of the Divisia index developed by Cleveland et al.: http://www.eoearth.org/article/Net_energy_analysis).

One method of comparison would be to compare the ability to convert a specific source to heat (http://www.eia.doe.gov/cneaf/electricity/epa/epat5p4.html). To produce the same amount of useful heat, about three times as much oil is required when compared to electricity. So while the lower limit would ask for a direct 1:1 comparison, a “bonus” factor of three for electricity sets the upper limit. However heat – today – is no longer the key use of oil; heat may be produced with natural gas or coal at much lower cost (at less than a third of that of oil). In the predominant applications for crude oil today, transportation fuels and chemicals, electricity is at a clear disadvantage. We therefore decided to assume a bonus for electricity in the middle of the two possible values at 200%, i.e. we attribute twice as much value to a kWh in electricity when compared to crude oil, and equally, set the threshold for economic trouble at twice that of oil.

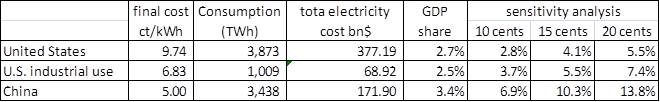

Table 2: relative prices of electricity and oil

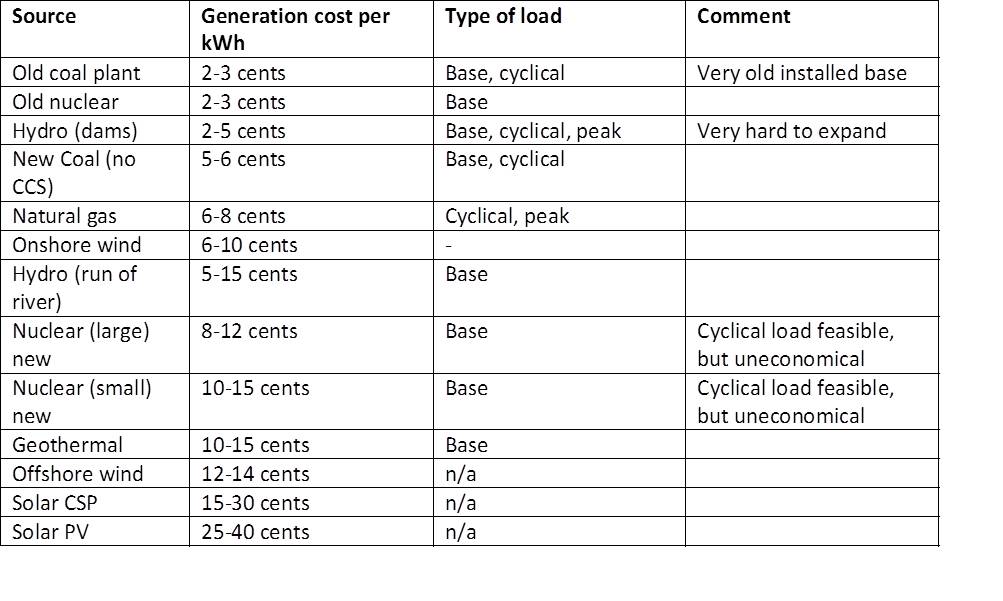

Under such an assumption, we see in Table 2 that electricity prices become critical at around 9 cents per kWh, equivalent to about $70/barrel of oil, and then unbearable at 15-18 cents (equivalent to $130-150 oil). This is an average value for an entire industrial society, as wealthy private consumers can tolerate rates even higher than 20 cents per kWh.
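The equivalence between electricity and oil prices can be approximated in a few lines. This is a rough Python sketch, assuming the 200% bonus and the 1700 kWh per barrel stated earlier; the figures in Table 2 may have been derived or rounded somewhat differently, and the function name is hypothetical.

```python
KWH_PER_BARREL = 1700      # energy content of a barrel of oil, from the text
ELECTRICITY_BONUS = 2.0    # a kWh of electricity is valued at twice a kWh of oil


def oil_equivalent_usd_per_barrel(electricity_cents_per_kwh: float) -> float:
    """Oil price ($/barrel) that burdens society like the given electricity price."""
    oil_cents_per_kwh = electricity_cents_per_kwh / ELECTRICITY_BONUS
    return oil_cents_per_kwh / 100 * KWH_PER_BARREL


for cents in (9, 15, 18):
    print(f"{cents} cents/kWh ~ ${oil_equivalent_usd_per_barrel(cents):.0f}/bbl")
```

With these inputs, 9 cents corresponds to roughly $77 per barrel, and 15-18 cents to roughly $128-153, which is in line with the approximate thresholds quoted above.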

But unfortunately, a society doesn’t just consist of consumers; it also needs to produce goods and services, and there, a cost of 15-18 cents will definitely be unacceptable. Given that Chinese manufacturers often operate with a final electricity cost of between 4 and 5 cents per kWh, even the 2008 average price paid for industrial electricity of 6.83 cents puts domestic U.S. companies at a significant disadvantage. At today’s electricity price levels, highly energy-intensive applications are no longer competitive, which is already visible in industrial trends – it is not only labor-intensive work that is going abroad; energy-intensive industries such as aluminum smelting and steel manufacturing are also leaving areas with high electricity cost.

Another method available to create a metric for “acceptable” electricity prices is to use the ratio of electricity cost to total GDP. At the average rate of 9.74 cents per kWh of delivered electricity, all electricity consumption costs the United States about 2.6% of U.S. GDP. If we separate out the industrial portion of GDP (2,737bn US$ in 2008), a similar portion (2.5%) is spent on electricity, at the average price of 6.83 cents. Should this price – for example – triple to 20 cents, suddenly 7.4% of total industrial cost would go towards electricity. This is far more than the profit margins of most energy-intensive industries.

For the U.S., where a large portion of heavy industry has been cut back already due to the relatively high cost of labor and energy compared to other places, such an increase may seem bearable. But what if China were to operate under the same regime, replacing current low-cost electricity from coal with expensive new sources? In China, electricity alone amounts to approximately 3.5% of GDP at an average cost of 5 cents/kWh; quadrupling the cost per kWh to the same 20 cents would demand that the country divert 13.8% of its GDP to electricity. This is not feasible, as it – together with oil, coal and natural gas – would divert more than 25% of total GDP towards energy alone, representing a society-level EROI of 4:1. One of the reasons why China fares so badly here is that the country provides a lot of the cheap energy Western societies no longer have, which they then import embedded in goods.

Table 3 – electricity price sensitivity U.S. and China
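The price sensitivity for the U.S. and China can be reproduced approximately with a simple proportional scaling. The Python sketch below assumes that consumption stays unchanged when the price rises (no demand response), which is our simplifying assumption; the function name is hypothetical and the inputs are the rounded percentages quoted in the text.

```python
def scaled_share(current_share_pct: float, current_price: float, new_price: float) -> float:
    """Share of GDP (or of industrial output) spent on electricity after a price change.

    Assumption: the quantity of electricity consumed stays constant.
    """
    return current_share_pct * new_price / current_price


# U.S. industry: 2.5% at 6.83 cents/kWh, price rising to 20 cents/kWh
print(f"U.S. industry: {scaled_share(2.5, 6.83, 20):.1f}%")
# China: about 3.5% of GDP at 5 cents/kWh, price rising to 20 cents/kWh
print(f"China: {scaled_share(3.5, 5, 20):.1f}%")
```

This yields about 7.3% for U.S. industry and 14.0% for China. The small differences from the 7.4% and 13.8% quoted above presumably stem from rounding in the input percentages.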

If we want to run a complete industrial society, looked at on a global scale, energy prices above certain levels are not sustainable, as they reduce available surpluses for consumption and investment. And unfortunately, those cost levels of 15-20 cents per kWh on average are exactly where societies are headed with the planned changes. We will cover those aspects in more detail further below, when looking at individual technologies.

Meeting demand – in more detail

In order to understand what we need and what we receive from multiple technologies, it seems important to split out the various types of load grid operators have to deal with.

Base load – defined as the long-term minimum demand expected in a region – is usually provided by technologies with relatively low cost, high reliability and limited ability to modulate output. This includes nuclear power plants, lignite coal plants and run-of-river hydroelectric plants. Those plants typically have to operate continuously at relatively stable loads, as otherwise their efficiency is reduced significantly, leading to higher cost per unit of output. Also, re-starting those power plants is relatively time-consuming and inefficient. In most countries, base load capacity is capable of covering approximately 100% of low demand (during nights and weekends).

Intermediate or cyclical load – the foreseeable portion of load variation over a day – is provided by load-following sources that can modulate to higher or lower output levels, or be turned off and on almost entirely within a relatively short time. However, these sources, for example some coal power plants, usually require some lead time to raise or reduce output. Today, natural gas is used for a significant portion of cyclical load.

Peak load – usually required within very short periods of time for a few hours a day – can be provided only from sources that can be turned on and off within minutes. This typically includes gas and small oil power plants as well as stored hydropower (dams or pumped hydro). Peak capacity can be provided by spinning reserve plants (i.e. running plants that can increase output quickly) or by non-spinning sources, which can be turned on within minutes.

Beyond technology limitations that make it difficult or uneconomic to ramp capacity up or down quickly, the key factor in the eligibility of a technology for the use in peak, cyclical and base load mode is the cost share between capital investment and fuel cost. The higher the fuel cost share, the more suitable a technology becomes to support peak power; the higher the investment share, the more operational hours are required to arrive at an acceptable average price per kWh. We will look at this issue further below, but this for example is the main reason why nuclear power is such a bad load-following or peak source.
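The effect of the cost split between capital and fuel can be illustrated with a very simple average-cost calculation. In the Python sketch below, all plant figures are hypothetical round numbers chosen only to show the shape of the relationship; they are not data for real power plants.

```python
def average_cost_cents_per_kwh(fixed_usd_per_kw_year: float,
                               fuel_cents_per_kwh: float,
                               operating_hours_per_year: float) -> float:
    """Average cost per kWh for 1 kW of capacity running the given hours per year.

    The fixed (capital) cost is spread over the hours of operation; the fuel
    cost is incurred per kWh produced.
    """
    fixed_cents_per_kwh = fixed_usd_per_kw_year * 100 / operating_hours_per_year
    return fixed_cents_per_kwh + fuel_cents_per_kwh


# Hypothetical plants: (annual fixed cost in $/kW, fuel cost in cents/kWh)
capital_heavy = (400, 1)  # e.g. a nuclear-like cost structure
fuel_heavy = (60, 7)      # e.g. a gas-turbine-like cost structure

for hours in (500, 4000, 8000):
    a = average_cost_cents_per_kwh(*capital_heavy, hours)
    b = average_cost_cents_per_kwh(*fuel_heavy, hours)
    print(f"{hours} h/year: capital-heavy {a:.1f} c/kWh, fuel-heavy {b:.1f} c/kWh")
```

With these illustrative numbers, the capital-heavy plant is cheaper when it runs almost all year, but several times more expensive per kWh than the fuel-heavy plant when it only runs 500 hours, which is the situation a peak source faces.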

Demand flexibility has a (high) cost

Another point has to do with the flexibility of electricity use, i.e. the possibility of turning something on when supply is abundant, and turning it off when power is scarce. The problem lies with the nature of most uses: many applications are simply inflexible, like those that require something to run for 24 hours a day - data centers are among them, and so are some key industrial processes. Lighting is not flexible, nor is access to heavy uses of electricity in households, such as cooking, using electronics or most kitchen appliances. We also want hot water and cool air when we need it, and usually we don’t want to schedule our laundry because someone tells us to do so, even though this is probably the easiest part. Now some applications, particularly heating (air and water) and cooling (air and goods), indeed have certain flexibility potential. We can run a freezer or air conditioner that produces ice to bridge supply gaps, or we can build a water heater which produces enough hot water to get us through the day, a very common application today in Switzerland, where night energy rates are often half of daytime rates even for households. However, such a time shift comes with tradeoffs: any application that uses storage instead of directly converting electricity into the desired quality output (heat or cold here), ultimately adds cost, for several reasons.

Making equipment flexible comes at a cost, either the cost of information transfer (for price-regulated markets) or the cost of storing the required energy for later use. France has been quite active at experimenting with contracts allowing them to regulate energy according to supply, where customers pay less for power that can be cut off at any point in time. This is especially important in France because of the inflexible nature of their generation technology mix with almost 70% coming from nuclear power. Yet the flexibility French grid operators were able to evoke from that market mechanism, despite the heavy incentives, was around 2-3% of total peak demand (according to RTE, the French grid operator). Most users obviously prefer the inconvenience of higher prices versus the inconvenience of service interruptions, even for things that are not mission-critical. This fact leaves us with approaches that actively shift energy consumption without affecting the end-user. Mostly, this translates to some kind of storage, which has a number of disadvantages.

Every piece of equipment that includes a storage mechanism is significantly more complex than one that operates without, and because of that complexity becomes more expensive, more energy-intensive in its manufacturing, and more exposed to failure. Additionally, each storage process incurs losses. If we produce hot water at night that should last through the entire day, some of the heat dissipates, dependent on how well insulated the storage tank is (again this is dependent on cost and effort, as well as space). The same is true for air-conditioners or freezers that use ice produced at night as buffer – they are less energy efficient overall. Both applications can still be economical for the end user and society as a whole if they use cheap base-load power at night and avoid using peak electricity during the day. Ice-based air-conditioning systems are quite common in office buildings in some parts of the U.S., where utilities charge different rates between night and day. But there is a caveat: all those approaches are geared at balancing two almost steady systems with fully predictable 24 hour cycles, nightly base load production and daily usage patterns with a peak or two. Thus, the maximum storage time required is 10-15 hours, which reduces system complexity as well as conversion and storage losses to acceptable levels. Now with renewable energy supplies, we are suddenly confronted with irregular patterns that can include days to weeks of over- and undersupply. In those cases, storage and conversion losses beyond a few days become almost insurmountable hurdles, as cumulative losses grow quickly over time.
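How quickly standing losses accumulate with storage time can be sketched with a simple compounding model. The loss rate used in this Python sketch (0.5% of the stored energy per hour) is a purely hypothetical value for illustration; real storage systems differ widely, and conversion losses on charging and discharging are ignored here.

```python
def remaining_fraction(loss_per_hour: float, hours: float) -> float:
    """Fraction of stored energy left after `hours`, with a constant hourly loss rate."""
    return (1 - loss_per_hour) ** hours


HYPOTHETICAL_LOSS_PER_HOUR = 0.005  # assumption: 0.5% of the stored energy is lost each hour

for label, hours in (("12 hours", 12), ("3 days", 72), ("1 week", 168), ("2 weeks", 336)):
    left = remaining_fraction(HYPOTHETICAL_LOSS_PER_HOUR, hours)
    print(f"after {label}: {left:.0%} of the stored energy remains")
```

Under this assumption, a loss that is barely noticeable over an overnight cycle consumes more than half of the stored energy within a week.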

So in a nutshell – there are technical solutions for many of these problems, but often the outcome no longer makes economic sense – neither for the individual user nor for a society.

Moore’s law and receding horizons

A key assumption of many forward projections for renewable energy production is that the technology will become cheaper and cheaper over time. Unfortunately, this isn’t true for many technologies, especially as fossil fuel inputs become more expensive.

One of the often cited rules in energy discussions is Moore’s law, which describes the fast advancement of capacity improvements (and price decreases) in computing power. It says that the number of transistors on a chip, and with it the density of calculation power, doubles roughly every two years, a pace that has been achieved relatively consistently since 1970. This has led to the fact that a smartphone today has more capacity than large mainframe computers in the early Seventies.

However, outside electronics, Moore’s law does not apply and has never applied for anything. A physical structure remains a physical structure, and does not have the multiplication potential that comes from miniaturization. We may be able to raise the efficiency for a photovoltaic panel from 18 to 20%, but not double it every two years no matter what we do, given the physical limits. The same is true for the materials used for its manufacturing; we might reduce them, but often by 10-20% and sometimes at the cost of more complex tools and purer materials (which also require energy). And erecting a modern wind turbine always requires steel, concrete and many advanced materials, which won’t change, no matter how much we optimize it.
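The gap between Moore’s law and physical technologies becomes obvious when the doubling is compounded. A small Python sketch, assuming a 40-year span (1970 to 2010) and the two-year doubling period mentioned above; the function names are our own.

```python
import math


def doubling_factor(years: float, doubling_period_years: float = 2.0) -> float:
    """Total improvement factor after `years` of doubling every `doubling_period_years`."""
    return 2 ** (years / doubling_period_years)


def years_until_limit(start: float, limit: float, doubling_period_years: float = 2.0) -> float:
    """Years of Moore's-law-style doubling until `start` would reach `limit`."""
    return doubling_period_years * math.log2(limit / start)


print(f"Computing, 1970-2010: factor {doubling_factor(40):,.0f}")
print(f"PV panel, 18% -> 20% efficiency: factor {0.20 / 0.18:.2f}")
print(f"PV doubling every two years would pass 100% after {years_until_limit(0.18, 1.0):.1f} years")
```

Forty years of doubling yields an improvement of about a million-fold, while the step from 18 to 20% panel efficiency is an improvement of about 11%. A panel efficiency that doubled every two years would exceed 100% in about five years, which is physically impossible.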

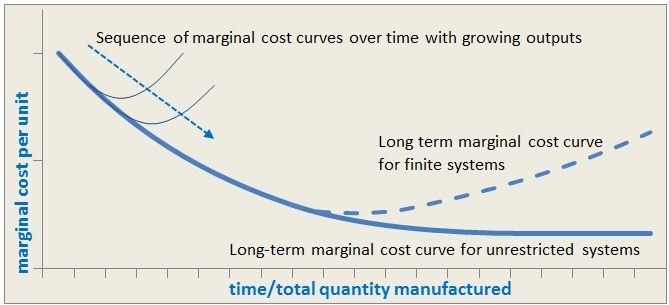

For normal industrial goods, price curves often show an asymptotic form. When a technology is new, neither its production nor its outputs are focused on efficiency; production facilities are small and processes involve a lot of manual labor. Also, new technologies often get produced in advanced economies with higher labor and energy cost. With maturing manufacturing technologies, more efficient and scaled up factories, and the inclusion of lower cost labor and energy from – for example – China, production becomes cheaper and prices fall. Eventually, when labor and production costs become optimized, the decline in price of the product slows, until it reaches a stable retail price more dependent on the raw materials and energy required to produce and transport the good.

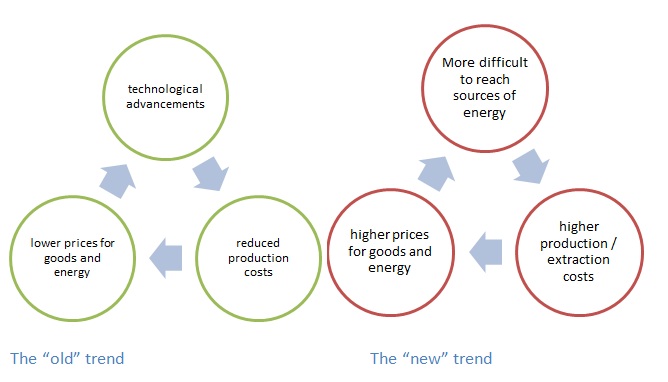

In many cases, the picture for raw materials and raw-material-driven products begins to look like the dotted line, despite rapidly growing output:

Figure 6 - Marginal cost curve for supply-constrained resources

During the past few years, we have seen an important reversal of this key underlying trend, which briefly visited our economies in 2008 when – with rising resource prices – everything from food to fuels suddenly became more expensive. Thanks to the economic crisis and reduced demand, this phenomenon has partially disappeared, but for some key commodities (such as copper, iron ore, coking coal and some others), we are already back to pre-crisis levels or higher. This is the “glass-half-full” trend, which applies to almost all natural resources, but first and foremost energy. Even if we – as many people correctly state – have enough of something in the ground, getting it out becomes more difficult, has to happen further away and in geopolitically riskier places etc.

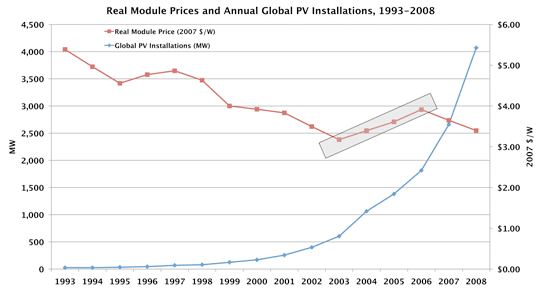

This is confirmed by the cost of new power plants, where estimates have recently gone up because of higher input costs (for almost everything, from nuclear to coal to wind towers). Even for solar panels, the continuous reductions experienced in the past did not continue between 2003 and 2008, despite rapidly growing production. The last important cost reduction began around 2006, when Chinese manufacturers entered the market, bringing low-cost production energy (mostly coal-based) into the game, which is not truly a sustainable model. In 2009, due to overcapacity and massively reduced raw material prices, costs came down again, and there might even be room for some further reductions, but this story ends once input prices go up.

Figure 7 - Cost of solar panels (Pdf warning)

If that core trend of higher energy cost, particularly at the historically lowest-priced end, cannot be reversed, which we doubt it can, this has implications for everything that uses those inputs, as it raises the price with the cost of the raw materials and the energy that go into them. This effect might, in turn, effectively end the trend of lower and lower prices for everything, including energy generation technology, no matter what it is.

Figure 8 - The “old” trend

Figure 9 - The “new” trend

Base load power – a real problem

Except for solar and wind, most of the technologies currently seen as potential future output providers deliver base load power. This is true for biomass, for geothermal, for nuclear, and to a certain extent for coal. All those generation approaches have only limited load following capabilities, for very different reasons.

Now, stochastic renewable sources (mostly wind) are coming into play, often with a “right of passage”, i.e. no limits on selling into the grid at a preferred price. Whoever comes next only gets to sell when there is still demand, and – in a free electricity market like the ones in most OECD countries – that means that prices for coal, nuclear and other base load outputs without a preferred status (biomass mostly has that status) drop sharply. Some analysts have even considered this a positive phenomenon, but actually it is not. What it really does is this: because wind is given preference, it pushes the marginal price (but not the cost) of those steady sources down and thus makes base load generation economically unattractive, because less steady demand at lower prices simply translates into an unacceptable risk for investors. Spot markets are among the key reasons why no more nuclear and hardly any coal power plants were built in Western economies during the past decade.

In a future electricity system, we will see an increasing disparity between a growing pool of inflexible (for cost or technology reasons) base load power, a mission-critical pool of peak and cyclical load capacity, and that new, unpredictable pool of sources that deliver whenever they deliver, irrespective of demand.

A new electricity mix

If we use some currently available numbers for various electricity generation techniques, we might come up with the following for generation capacity in the United States, without any subsidies:

Table 4 – cost and suitability of various generation technologies

We are aware of the fact that the above numbers are being disputed, which is why we have included broad ranges. This is not the point we are trying to make – the point is that incremental replacement of fossil fuel-based plants, especially cheap coal, with more expensive technologies has the potential to lead to large increases in the price of electricity.

Now on top of the generation cost shown in Table 4, we have to bear the cost for maintaining and operating the electricity grid, which delivers the power to homes, offices and factories. For a standard grid today, which does not have to do much more than transmit electricity generated according to demand, this might add about 2-3 cents per kWh. When looking at the cost ranges above, it becomes quite obvious that even the lowest cost sources already bring the total price of electricity dangerously close to what industrial users can afford.

Now on top of the generation cost shown in Table 4, we have to bear the cost of maintaining and operating the electricity grid, of metering, and some profit margins for the utility companies that deliver the power to homes, offices and factories. For the U.S. today, where the grid does not have to do much more than transmit electricity generated according to demand, this adds between 2 and 7 cents per kWh.

Table 5 – approximate share of final electricity cost (multiple sources, IIER calculations)

When looking at the cost ranges, it becomes quite obvious that even the new lowest cost sources already bring the total price of electricity dangerously close to what industrial users can afford.

What really matters is “useful energy”

And now comes the challenge: Only power that meets someone’s demand has a positive price. If I am asleep and someone offers me free power to light my entire house like a Christmas tree, I don’t care. On the other hand, when the food in my freezer starts to thaw, I would probably be ready to pay a very high price for the few kWh it needs to keep that device going. The same is true in aggregate. Spot electricity prices go as low as 0-3 cents during the night (or even negative, http://www.scribd.com/doc/27816762/Negative-Prices-in-Electricity-Market), and up to 12, 15, sometimes even 50 cents at peak times during the day.

Now what we need to measure in order to understand the entire delivery system is not so much the price paid for one kWh of electricity produced, but the cost of electricity delivered according to demand. We want to determine how much it costs to provide a kWh from a particular source to supply our human energy demand patterns, and if that doesn’t work in a straightforward manner, we have to estimate the extra cost required to either shift it to the right time, or to shift demand to the time of production. Only once that has been factored in do we know how expensive a kWh of electricity from a particular source really is.

Sources with little flexibility, such as coal and nuclear or run-of-river hydro plants, mostly produce around the clock. Given their low average cost, the average prices received are profitable, despite the fact that during the night they sell below full cost, but usually above marginal (fuel) cost. The rest (power plant investments, non-flexible operations cost) are incurred irrespective of plant outputs. Thus, adjusting output to more closely meet demand would incur even higher cost (or efficiency losses, or both), put stress on the equipment and require higher operations and maintenance efforts.
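To make the point about around-the-clock producers concrete, here is a minimal sketch. The hourly price profile and both cost figures are hypothetical illustration values, chosen only to sit roughly in the range of the night and peak spot prices mentioned above; they are not data from the authors.

```python
# Illustrative only: all prices and costs below are hypothetical assumptions.
# Hourly spot prices in cents/kWh: 8 night hours, 12 shoulder hours, 4 peak hours.
NIGHT_PRICE, SHOULDER_PRICE, PEAK_PRICE = 2.0, 6.0, 15.0
hourly_price = [NIGHT_PRICE] * 8 + [SHOULDER_PRICE] * 12 + [PEAK_PRICE] * 4

FULL_COST = 5.0      # assumed full cost (capital + fixed operations + fuel), cents/kWh
MARGINAL_COST = 1.5  # assumed marginal (fuel) cost, cents/kWh


def average_price_received(prices, output):
    """Output-weighted average price for a plant producing output[h] kWh in hour h."""
    revenue = sum(p * q for p, q in zip(prices, output))
    return revenue / sum(output)


flat_output = [1.0] * 24  # base load plant: same output in every hour
avg_flat = average_price_received(hourly_price, flat_output)

# Hours in which the plant sells below full cost but still above fuel cost.
hours_below_full_cost = sum(1 for p in hourly_price if MARGINAL_COST < p < FULL_COST)

print(f"average price received: {avg_flat:.2f} cents/kWh (full cost {FULL_COST:.2f})")
print(f"hours sold below full cost but above marginal cost: {hours_below_full_cost}")
```

With these assumed numbers the plant averages about 6.17 cents/kWh, above its assumed full cost of 5 cents, even though it sells below full cost during the eight night hours. Swapping in a different price profile shows how quickly that margin shrinks when daytime prices are pushed down.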

If we had to run our grids with just those base load sources, electricity would be more expensive, either from those efficiency losses, from lost overproduction during the night (to still meet peak demand), or from additional measures to shift demand, such as incentives and storage (either in the network or in end-user appliances, as described above). This would add to the basic generation cost. After including these extra efforts, electricity generated in coal or nuclear plants (see section below) would have to sell at a higher price than just the generation plus distribution cost.

Other sources, mostly dammed hydro, oil, and natural gas, are generally able to deliver exactly on time (hydro only to a limited extent, as certain minimum flows need to be maintained in order to keep ecosystems in rivers below the dam intact). In general, we can turn them up when demand rises, and cut production back as soon as less power is needed. Those sources do not require extra cost on top of their generation cost and the basic effort to operate a grid. A kWh of electricity produced from natural gas thus usually costs approximately 6-10 cents (obviously as long as natural gas prices don’t change).

For sources that don’t have the characteristics described above, things become trickier. We wouldn’t be talking about smart grids, high voltage DC lines, storage in ELVs, and more, if it wasn’t for the fact that most of the sources we want to add to our grid are unpredictable beyond the reach of our weather forecasts. For sources that are capable of producing everything between 0% and 100% of total nameplate capacity at any given time, irrespective of demand, we need to have very different approaches to make them work, and none come cheaply.

So overall, as with all energy sources, there are limits to how much electricity can cost and still be bearable for people. And not just for us rich people who plan future energy systems, but for everybody, and for those industries that manufacture the stuff we all use.

To be continued...

Next week, we will go through a list of all the currently available technologies for generation, transmission and storage, and review total feasibility and cost for each including transmission and grid management, and show certain trends for the future, and, ultimately, provide our assessment as to whether these technologies will be able to deliver what we need to keep grids going.

(link to following post: The Fake Fire Brigade Revisited #4 - Delivering Stable Electricity)

***************************

Previously in this series:

The Fake Fire Brigade - How We Cheat Ourselves about our Energy Future

Revisiting the Fake Fire Brigade - Part 1

Revisiting the Fake Fire Brigade - Part 2 - Biomass - A Panacea?

Thanks Hannes and Stephen and Nate!

One thing you didn't mention is the Jevons paradox in reverse. The Jevons paradox going forward says that as the price of a type of energy drops, you tend to use more and more of it. In reverse, as the price goes up, you tend to use less and less of it.

There is also something that you, Hannes, have pointed out earlier. The substitution of electricity for labor, which contributed to what looks like growing efficiency, can be expected to turn around and go backward as the price of electricity goes up. You may still of course get some technological improvements, but it seems like not too far down the road, you get an effect similar to what you show in Figures 8 and 9.

So higher electricity prices are truly a huge hurdle for a complex society that has learned to depend very much on electricity. Their existence is likely to send the world into a de-growth pattern that will be a real challenge for all the debt that is currently outstanding, and for our current method of financing projects.

The authors' remarks about manufacturing technologies reaching maturity, with product prices falling to a bottom and then beginning to rise again as materials and energy costs rise, are something that needs to be thoroughly discussed.

This general observation seems to imply the eventual end of throwaway society, for products ranging from drinking cups to automobiles.

Personally I have been considering buying some pv for a long time, but so far I haven't because the price has been declining so fast it has proven to be good strategy to delay the purchase.

It seems fairly obvious that barring tech breakthroughs involving new designs and new materials, just about every manufactured product will demonstrate a similar price bottom, followed by ever rising prices.

Any comment from persons knowledgeable about the expected actual purchase prices of pv panels and the associated inverters, low voltage dc appliances and so forth will be greatly appreciated.

My experience has been to use solar power in a somewhat specialized environment. My wife and I retired in 1998 to live full-time in an RV (a fifth-wheel trailer) that we sometimes move around in response to the local weather. Sometimes we are "on the grid" in an RV park; sometimes we are "off-grid" in the desert. I have not upgraded my solar system for a couple of years, but I keep an eye on the cost and availability of solar. Costs are not dropping very fast.

Soon after getting into solar (circa 2000) I found an interesting effect. I did not have enough power off-grid to stay in the desert more than a few days before my batteries were drained; I had more power usage than power supply. Upon a detailed investigation I found that the primary culprit was the 12-volt house lighting. Over half of our electrical consumption was in the incandescent and fluorescent fixtures. I had thought the big users would be the TV and computers we are addicted to, but research showed I was mistaken.

By 2005 I decided I had to have LED lighting if my solar system was to survive, and looking around found nothing suitable. Everything looked and acted like I had put flashlights in my ceiling. My background is software and electronics in Silicon Valley, so I felt confident I could invent a new way of using LEDs that would meet my needs. I began to experiment -- I was not as good as I thought.

Luckily, at the 2006 show in Quartzsite I came across Kelly, a young man out of Intel in Mesa, who had just developed a prototype product to replace one of the 12-volt DC bulbs I used in my rig. I was pleasantly surprised when he demonstrated that it was fully compatible with my incandescent bulb and produced more light -- and much, much less heat. We sat down that evening and designed a replacement for my other primary bulb, a 12-volt glass wedge bulb.

I became his first dealer of RV bulbs the next day, and began to sell LED bulb replacements to other RVers, and to use my rig as the test bed for new designs of LED lighting.

I now live in an LED environment, and Kelly and I continue to work together in LED designs and marketing, especially on how to take the totally acceptable solutions we have found for the 12-volt DC environment of an RV into homes and workplaces. Since we are pushing against the establishment and convention that 120VAC is the only way to power a home or office, it is slow, but there is progress.

My reason for telling this tale is to point out with my experience that solar by itself is not always a good solution. We must also find ways to reduce our needs for electricity so the size and expense of a solar system is not out of sight. LEDs are an important ingredient to making solar power really useful.

Sam Penny, the Prudent RVer

Thanks, Sam, for the realistic portrayal of what PV will get you. Too many still believe that solar technology is going to save us. Solar is not going to give us our current civilization in any way, shape, or form, as it is barely net.

PV is not barely net. Energy payback is less than 2 years in southern Europe.

http://www.ecn.nl/docs/library/report/2009/m09034.pdf

I just installed a large array, and although I'm lucky enough to receive subsidies (1 day per year with a 1:5 chance to get picked this year), I calculated that grid parity has already been reached for private households. The cost of generating solar electricity is about 20ct/kWh, while the consumer electricity price in my area is roughly 22ct/kWh. Not enough difference to trigger a flood of private installations, but there are ever more people in the Netherlands who now install solar without any incentives!

(About the price: If you install the system yourself, save the installation fees and shop for cheap components the price may be as low as 12ct/kWh!)

If EROI for renewables is high enough you can just build massive overcapacity to overcome the problem with erratic supply?

Sure, but it wouldn't be optimal to use that as your only strategy.

It's certainly a very useful component, though. See http://www.theoildrum.com/node/6910/713977

When are people going to grasp the concept that 'civilization' in the form that it exists today is simply *NOT* even worth trying to save. It is way past time to stop thinking in these terms.

Personally I think that it may well be possible to build a completely new form of civilization in which what we now call alternative energy sources, such as solar, have a significant role to play.

BTW I haven't checked in here at The Oil Drum lately because I'm traveling in Europe, last week I happened to be in Germany where small scale residential solar energy systems, both hot water and photovoltaics are ubiquitous.

As most of you probably know by now because of a recent post here, the German government, at least, is very peak oil aware.

What will really cause TSHTF here in Europe, and soon, is that most transportation of goods here is done via truck.

With respect to that, the entire issue of solar energy is completely moot. So say good bye to the civilization that depended on oil and start thinking outside the box, folks. BAU is dead but solar is just being born.

Some of the new LED stuff is amazing. Probably a small but genuine fireman.

Howdy OFM,

We just saw our first increase in price for a PV panel in the last 2 years or so. The price now is less than half (price per watt, different panels, different manufacturer, different quality) of what it was a few years back. This was just one make of panel, and it only went up a few cents, but it was an increase.

The least costly panels (including the one that just got a little more expensive) are selling for almost 1/2 what the 'top-quality' ones are.

Inverter prices are still going down.

Expectations? Who knows! Prices will go way down if the economy collapses but no one will be able to afford to buy anything....

Hi Gail,

I confess I'm deeply puzzled by this statement. I cannot for the life of me imagine replacing an industrial motor with human labour and expecting an equal amount of work to be done at a lower cost. In most cases, it would be physically impossible to do this work manually, and even if the task were to fall within the physical means of the individual, the economics make no sense whatsoever. For example, at what cost would dairy farmers abandon their milking machines in favour of hand milking, or the garment trade replace their electric sewing machines with ones operated by foot pedals? $1.00 per kWh? $10.00 per kWh? $100.00 per kWh? [A commercial electric sewing machine can operate at five thousand stitches per minute and when equipped with a one-quarter HP motor would perform 1.6 million stitches per kWh.]

Cheers,

Paul
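The sewing machine figure quoted above can be checked with a few lines of arithmetic. This is only a sketch: it assumes the motor draws its full rated one-quarter horsepower continuously and ignores motor losses, neither of which is stated in the comment.

```python
# Check of the "1.6 million stitches per kWh" figure from the comment above.
# Assumption: the motor draws exactly its rated power while sewing, with no losses.
STITCHES_PER_MINUTE = 5000
MOTOR_HP = 0.25
KW_PER_HP = 0.7457  # 1 mechanical horsepower is about 0.7457 kW

motor_kw = MOTOR_HP * KW_PER_HP                   # about 0.186 kW
stitches_per_hour = STITCHES_PER_MINUTE * 60      # 300,000 stitches
stitches_per_kwh = stitches_per_hour / motor_kw   # about 1.61 million stitches

print(f"{stitches_per_kwh / 1e6:.2f} million stitches per kWh")
```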

Edit:

Hannes asked me to take out the links to his presentations from a year ago, since they have been updated.

We will have to ask Hannes for his current views.

Thanks, Gail, for providing links to these papers; much appreciated. I've only glanced at the section on milking so perhaps this is explained elsewhere and I've missed it to my great embarrassment, but I don't understand the difference in labour rates if you're pitting one scenario against another. In the case of manual milking, the cost per hour of labour input is set at $3.00 and under the semi-automated and automated scenarios it's fixed at $4.56 and $15.00 respectively. What accounts for the difference?

In any event, I'm still trying to gauge the impact of higher electricity prices on current and future investments, so to go back to my original question: at what point does it make sense for a dairy farmer to abandon these milking machines and revert back to using a bucket and stool?

Cheers,

Paul

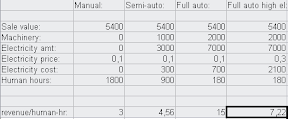

Hall&Kunz's milking example is just too interesting not to comment on.

I think it was built up by

- assuming the (sale) value of the milk constant at 5400 $/yr

- assigning the left-over revenue after paying for machinery and electricity as the value of the human input, divide by hours to get the pay-off per human work hour.

(A simple spreadsheet with these assumptions reproduces the results.)

Varying only the price of electricity, the "full auto" scenario gives the same payoff of 3$ per human-hour as the "manual" scenario when the el. price is 41 cents.

If the price of el. is tripled to 0,30$/kWh, the payoff per h-hr is 7,22$, more than twice that of manual and more than 50% better than semi-automatic.

----

A couple reflections around this:

The customary econospeak for this kind of development is "increasing labour productivity". And for many purposes that's an entirely valid way of looking at it, but for our purposes -- figuring out the link between economy and energy -- I think it is misleading.

In the first step, we're adding the ability to use extrasomatic energy; in the second step, we expand it. The "marginal cost" in extrasomatic energy of displacing labour increases: the semi-auto stage uses 3000 kWh to replace 900 h-hrs, for a cost of 3,33 kWh/h-hr, while the full-auto stage replaces an additional 720 h-hrs at a cost of 4000 extra kWh, for 5,56 kWh/h-hr replaced. However, the increased wealth effect of going full auto is dramatic, even if you triple the price per kWh! So as long as the price of electricity stays below the break-even point, we're likely to see the trend of increased automation continue. It will add less wealth than before, but the trend still has a goodly ways to go before it starts adding negative wealth.
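The figures quoted in this comment can be reproduced with a short sketch. The milk revenue, the pay-offs per hour, the kWh used and the hours replaced are taken from the thread; the annual machinery costs (1000 and 2000 $/yr), the 1800 manual hours and the base price of 0.10 $/kWh are back-calculated assumptions chosen to match those quoted results, not values confirmed from the Hall and Kunz paper.

```python
# Reconstruction of the milking example under stated assumptions.
MILK_REVENUE = 5400.0  # $/yr, quoted in the thread
BASE_PRICE = 0.10      # $/kWh, implied by "tripled to 0.30 $/kWh"

# scenario: (human hours/yr, kWh/yr, ASSUMED machinery cost in $/yr)
SCENARIOS = {
    "manual":    (1800.0,    0.0,    0.0),  # 5400 $ / 3 $ per hour (inferred)
    "semi-auto": ( 900.0, 3000.0, 1000.0),  # 900 h replaced by 3000 kWh
    "full-auto": ( 180.0, 7000.0, 2000.0),  # further 720 h replaced by 4000 more kWh
}


def payoff_per_hour(name, price):
    """Revenue left after machinery and electricity, per human work hour."""
    hours, kwh, machinery = SCENARIOS[name]
    return (MILK_REVENUE - machinery - kwh * price) / hours


def break_even_price(name, target_payoff):
    """Electricity price at which the scenario pays target_payoff per human hour."""
    hours, kwh, machinery = SCENARIOS[name]
    return (MILK_REVENUE - machinery - target_payoff * hours) / kwh


manual_payoff = payoff_per_hour("manual", BASE_PRICE)            # 3.00 $/h
full_auto_tripled = payoff_per_hour("full-auto", 3 * BASE_PRICE) # about 7.22 $/h
full_auto_break_even = break_even_price("full-auto", manual_payoff)  # about 0.41 $/kWh

# Marginal electricity needed per human hour displaced at each step.
kwh_per_hour_semi = 3000.0 / 900.0  # first automation step
kwh_per_hour_full = 4000.0 / 720.0  # second automation step

for name in SCENARIOS:
    print(f"{name:10s} {payoff_per_hour(name, BASE_PRICE):6.2f} $/h at base price")
print(f"full-auto at tripled price: {full_auto_tripled:.2f} $/h")
print(f"full-auto break-even vs manual: {full_auto_break_even:.2f} $/kWh")
```

Under these assumptions the pay-offs at the base price come out at 3.00, 4.56 and 15.00 $ per human-hour, and each displaced hour costs about 3.3 kWh in the first step and about 5.6 kWh in the second. Changing the assumed machinery costs moves the break-even price, so the 41-cent figure should be read as a property of this particular parameter set.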

Another way of looking at it is that improved technology increases the value of electricity. (Above and apart from the familiar Jevons' "paradox", that increased efficiency increases value; here, "efficiency" is actually falling). Then we have two possible mechanisms that could explain why el. prices are increasing: One, that the (marginal) cost of producing it is increasing; two, that the value of electricity is increasing. Of course, both effects could be (and probably are) in play at the same time.

The high prices of electricity in Germany and Denmark are mentioned several places in this thread. I submit that since they are very advanced, the value of electricity is very high there, thus the price may be bid up without it necessarily reflecting increasing cost of production.

Thanks, KODE. I was sorry I hadn't read the papers more thoroughly before the links were removed as I was having difficulty understanding what was being said (and I fault myself for that more so than the authors).

Cheers,

Paul

Paul, I think there is a middle ground in this issue, where the original assertion, of labour replacing electricity, is correct.

It is obvious that human power cannot replace electric power for motive applications; even a battery electric forklift, which uses very little electricity, is worth many workers in terms of what it can lift and move - I doubt those will be replaced.

But where I see the tipping point is in complex, customised automated machinery (the milking machine is an example where the machinery has become standardised, because all cows are essentially the same) that is replacing the dexterity, not power, of people.

Consider two furniture making workshops, one large and one small. The large one (say an IKEA supplier) will have complex equipment to handle almost everything - pneumatic lifters for boards, rolling lines to move all the materials, automatic gatherer/stacking machines etc etc. This automation equipment does not use that much electricity, it is just very expensive to buy, and lends itself to doing one thing, very efficiently, so you end up with specialised factories making lots of one or two things. And, in the IKEA example, shipping those one or two things around the world.

Now, in the smaller workshop, they will still use electricity for their saws etc, maybe even a CNC cutting machine, but instead of the pneumatic handling equipment, it is far cheaper to employ a person to do it, especially if you are making numerous different things, and want to be able to introduce new products without re-tooling.

As energy costs (both oil and electricity) increase local production gains an advantage from reduced transport, and the local market is for smaller numbers of many things, so the places that can produce smaller volumes, efficiently, and multiple products, will do better.

A workshop today in, say, sunny Halifax might be considering whether to specialise in one thing and export everywhere, or make multiple things sold locally. Which is the more risky decision? Yes, you can produce the one model of chair, and take your chances that it will sell everywhere, not suddenly go out of style etc, and have a massive investment in automation to bring the unit cost down, but only at high volume, and with high exposure to (increasing) transport costs. Go the other way, make the chairs, tables etc to sell locally, and you have more flexibility to change your product mix, bring in new products, and not be at the risk of transport costs. BUT, you can't become a mega factory, either.

In the car business, automation clearly won out, but at a cost of inertia - re-tooling for new models comes at a massive cost, and companies can find that, if the car is not a hot seller, that by the time the tooling is paid off, the model is obsolete!

Which is the better model in an energy and resource constrained world? As a factory owner, would you be willing to make a large up-front investment in equipment that reduces your flexibility, and requires large production volume to be profitable, in a (potentially) shrinking market for whatever you produce?

Down the road from me is a family owned sawmill, that mills western red cedar (and sends some of it to the poor folks on the East coast who can't grow this beautiful wood). Their line is semi automated, it automatically cuts the logs to get the most large sized pieces possible, but they all come out on the same line, where a bunch of workers pick them and stack them onto separate stacks, according to size, and length. To replace these people with automation can be done, but is only worthwhile if the mill is prepared to have a huge increase in volume (which would exhaust the local cedar supply).

There will always be a place for machinery, but, especially if there is a shift towards more localised production, I think there will be more (not all) cases in the future, where it is better to have people operating the machines than machines to operate the machines.

Perhaps, but the kW involved here, are so tiny, as to be totally insignificant.

The impact here, is much better measured in employment, not kW;

Any Power effect is way below the noise floor.

The energy required to make all the extra robots is the main energy cost, not the energy the robots use themselves.

100,000 Transistors Now Cost Less Than a Grain of Rice

As energy costs (both oil and electricity) increase local production gains an advantage from reduced transport

Only a very small one. Water and rail use relatively little energy, and both can be electrified. Electricity costs won't increase enough to change the dynamics of long-distance trade.

See http://energyfaq.blogspot.com/2008/09/can-shipping-survive-peak-oil.html

So can trucking, at least for short distances (and never mind what T. Boone Pickens might say about whether a battery can move a truck):

http://www.youtube.com/watch?v=0f1AlrG8gVU

Of course, that still leaves a need for electric freight rail lines for longer-distance hauling, and probably means less long-hauling of goods in general, but...I'm OK with that.

I'll be disappointed if I don't see electric trucks coming to pick up my trash in the next few years (assuming we don't have a serious SHTF scenario before then). Ideal for that kind of short-range, fleet-based trucking...

The UK has had milk delivered by battery powered vehicles for decades,. It is about time the concept spread.

NAOM

So can trucking, at least for short distances (and never mind what T. Boone Pickens might say about whether a battery can move a truck):

http://www.youtube.com/watch?v=0f1AlrG8gVU

Of course, that still leaves a need for electric freight rail lines for longer-distance hauling, and probably means less long-hauling of goods in general, but...I'm OK with that.

I'll be disappointed if I don't see electric trucks coming to pick up my trash in the next few years (assuming we don't have a serious SHTF scenario before then). Ideal for that kind of short-range, fleet-based trucking...

One detail to keep sight of, is in the move from Truck to Rail, you cannot eliminate trucking; Rail lines clearly do not go to Supermarkets, factories etc.

So you target long haul truck freight, but only down to some mileage ceiling.

The bulk freight is likely already going by rail.

I also note, only 13% of truck freight shows as Container, in one USA study, which is the most-flip-able to rail.

You gain a fuel/tonne-km, but lose with multiple handling, typical empty trips, double-back effects... {and then there is that old chestnut quaintly called 'shrinkage'}.

I guess you have to hope, the tail-extension-time that buys, is enough to convert the local-truck fleets to alternatives, but that will be very costly.

Perhaps it needs something like a Solar-Topped Container, with Electric base, that can give traction to either Rail, or road trailer bogeys ?

Hmm - co-operation between Rail and Road ?

Best Hopes for Trolley freight,

Alan

Hi Paul,

I think the fundamental decision in this case is whether the initial capital investment in the required hardware (i.e., the purchase of machine "X") is warranted versus the continued use of manual labour. I would not expect the cost of the electricity consumed by this piece of machinery to be a significant factor in the decision making process. So my argument, very simply, is that higher electricity prices will not cause a return to manual labour, in and of itself, because manual labour will never be cost-competitive with electricity.

Cheers,

Paul

Paul, I think you are considering a direct BTU for BTU comparison. Often we have the capability of using a much less energy-intensive process that requires some (or more than the alternative) human labour to support. An example: in my home I have a spin dryer, which centrifugally extracts water from clothes. Using it, the total dryer energy input needed is reduced by 50-75%, but it requires an extra human loading/unloading step. The same thing applies if we replace a car ride with a bicycle ride. The bicyclist is not consuming a hundred horsepower in order to move; he is using much less energy, but the total human time/effort spent goes up. So oftentimes it is a matter of using human brainpower or manual flexibility to create a process that is more efficient in terms of energy consumption.

Hi EoS,

I'm looking at this in a very broad sense. If I understand Gail's position correctly, it's that higher electricity prices will result in the substitution of human labour for motive power ["The substitution of electricity for labor, which contributed to what looks like growing efficiency, can be expected to turn around and go backward as the price of electricity goes up."]. If anything, I would expect higher energy prices to further squeeze out labour as employee wages and benefits represent a larger portion of total overhead and there are generally greater opportunities to whittle that back through additional automation and improved process.

A semi-skilled labourer might be paid $10.00 per hour, say, and with various benefits and administrative overhead the actual number could be $15.00 or more; for our purposes, we'll assume the annual cost of that 2,000 hours of labour is $30,000.00. At an average cost of 10 cents per kWh, that one $30,000.00 employee effectively buys us 300,000 kWh/year of motive power, or 150 kWh per hour at 2,000 hours/year, which translates to about 200 continuous HP. Any way you look at it, that's a lot of motive power at a very reasonable cost.

Cheers,

Paul
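Paul's back-of-envelope comparison above can be written out as a short calculation. This is only a sketch of the arithmetic in the comment: it treats the whole labour budget as spent on electricity and ignores motor efficiency and the capital cost of the machinery, which the comment does not quantify.

```python
# How much electricity one worker's annual cost would buy (figures from the comment).
HOURLY_COST = 15.00    # $/h, wages plus benefits and overhead
HOURS_PER_YEAR = 2000  # working hours per year
PRICE_PER_KWH = 0.10   # $/kWh
KW_PER_HP = 0.7457     # 1 mechanical horsepower is about 0.7457 kW
HOURS_IN_YEAR = 8760   # all hours of a non-leap year

annual_labour_cost = HOURLY_COST * HOURS_PER_YEAR   # 30,000 $
kwh_per_year = annual_labour_cost / PRICE_PER_KWH   # 300,000 kWh
kw_during_shift = kwh_per_year / HOURS_PER_YEAR     # 150 kW over working hours
hp_during_shift = kw_during_shift / KW_PER_HP       # about 201 HP

# Same energy spread over every hour of the year instead of working hours only.
hp_round_the_clock = kwh_per_year / HOURS_IN_YEAR / KW_PER_HP  # about 46 HP

print(f"{kwh_per_year:,.0f} kWh/yr -> {hp_during_shift:.0f} HP during working hours")
print(f"spread over all 8,760 hours: {hp_round_the_clock:.0f} HP")
```

Note that the roughly 200 HP figure is continuous only over the 2,000 working hours; spread over the whole year the same energy corresponds to about 46 HP.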

It seems to me that one of the issues is that these things do not exist in isolation from the rest of the world. So, you cannot simply change electricity price and compare it to labor costs as if those costs were unchanging.

As electricity costs rise, they will crowd out other spending. The result of this will be that other areas will suffer economically. There is every possibility that you could see high structural unemployment which would accelerate what is basically an existing trend in terms of the downward pressure on labor pricing power. So you might be looking at labor willing to work at a fraction of the current rates because they need to work to eat.

Also, I believe you asked the question of when would the farmer switch from electric milking machines to human milkers. This would depend on individual farm finances of course. However, in a larger context, if electricity prices rise (along with other energy prices) the crowding out of other spending (health, food, feed, transportation fuel, etc.) and/or margins would eventually lead to the consideration of other alternatives. For some this might be the installation and maintenance of some kind of local energy system(s) (solar, wind, geothermal, hydro). However, not all farms would have sufficient renewable resources to provide adequate energy volume or to meet the needs required by a time-sensitive task like milking. In an environment of potentially increasingly available cheap labor, an obvious possibility would be to consider utilizing farm workers for milking. A likely outcome might be that large dairy farms would become impractical and that smaller-scale, local farming would eventually replace them. Whether that change would allow for existing overall production to be equaled or increased is a legitimate question. Personally, I suspect not. I suspect that overall production would be reduced as it became increasingly expensive/difficult to support the milking of the current dairy stock.

Note that this does not even get into the demand-side effects of consumers paying higher energy costs, of farmers raising prices to pass their energy costs (in part or full) down the chain, or of the crowding out of dairy demand as the prices rise. Less demand would have the impact of putting downward price pressure on the end product, squeezing margins further.

As you can see, I think that higher electricity prices could have significant economic consequences (most not real good from a BAU point of view). One of these consequences could well be (but by no means must be) the replacement of electrically powered work with human powered work.

Brian

Hi Brian,

When businesses get squeezed, it's normally the employees who feel the pinch first in terms of reduced hours and layoffs, not the motors. Wages may fall precariously, but I can't imagine they could ever fall to the point where motive power would be uncompetitive with human toil.

An individual in good physical health could sustain about 1/10th of a horsepower of manual effort, although not likely for eight continuous hours. Let's say that this individual is paid $1.00 per hour for his or her efforts, so our daily wage is $8.00. A 1/10th horsepower motor would draw approximately 75-watts, thus consuming some 0.6 kWh over this same work day. All else being equal, I would have to pay in excess of $13.00 per kWh before the services of this $1.00 per hour wage earner would be cost competitive.
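The break-even figure above can be checked with a short sketch. The function name and the 745.7 W per horsepower conversion are assumptions added for illustration; the wage, hours, and 1/10 HP output are the ones quoted in the paragraph.

```python
WATTS_PER_HP = 745.7  # mechanical horsepower (assumed conversion)


def break_even_price_per_kwh(hourly_wage, hours, horsepower):
    """Electricity price at which a motor costs as much as the worker it replaces."""
    motor_kw = horsepower * WATTS_PER_HP / 1000.0  # motor draw, losses ignored
    energy_kwh = motor_kw * hours                  # energy used over the shift
    wages = hourly_wage * hours                    # what the worker is paid
    return wages / energy_kwh


price = break_even_price_per_kwh(hourly_wage=1.00, hours=8, horsepower=0.1)
print(f"Break-even electricity price: ${price:.2f} per kWh")
```

Note that the hours cancel out: the break-even price is simply the hourly wage divided by the motor's draw in kW, so a longer or shorter shift does not change the result.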

Cheers,

Paul

Again, I think you're barking up the wrong tree. Humans, or even animal power, can't compete with our machines. I think that is true even if you have to grow plants and turn them into biofuel. So any substitution that happens will be for those cases where a little bit of human labour saves a whole lot of electricity (or fuel). Say a factory manager has a choice of two motors, one is more efficient, but requires monthly preventive maintenance. If power is cheap relative to labour he would choose the energy hog. In the reverse case, it would be a net cost savings to pay someone to do the preventive maintenance and pay lower power bills. This guy is not doing hard labour, generating lots of BTUs, he is just using brains plus dexterity to enable a more energy efficient method of production to be used. I think it is in these sorts of situations where the tradeoffs will occur.

That is kinda like, with high electricity costs, it is worthwhile hiring yourself to make changes in a business. If power was too cheap to meter, you'd be out of work.

I agree that the adoption of more efficient equipment will be driven by higher electricity prices, however, that's not the point of contention; rather, it's the claim that electric motors will be replaced by human labour because the latter will become more cost competitive as electricity prices rise.

Cheers,

Paul

Hi, Paul

I'm not sure whether Gail meant substituting labor for electric motors only or, in a more general sense, substituting labor for electricity in other uses including motors.

There are ways to get by with less electricity by using more labor that can save a great deal of electricity, although perhaps not enough in most cases to be considered "economic".

I could dig a ditch around a hillside to get a gravity powered flow of water to part of our farm, but it is far easier to run a pump and pump the same water uphill from a point farther down the stream.

I don't save enough in electricity to justify the labor but I line dry most of our laundry anyway.

Ditto the electric range and the hot water heater - we cook some in cold weather on a wood fired stove, since we need the heat anyway, and we heat some water for washing dishes and minor cleaning on the wood stove too. The savings are real and significant - to us - but they are not enough to be bothered with the wood range except in cold weather when we get a "twoferone".

A local furniture manufacturer in recent times has started buying lumber farther in advance of need than formerly, and going to some extra expense to store the lumber in a drying yard so as to reduce the time the wood needs in the dry kilns.

As I understand it, this is saving a considerable amount of electricity, enough to cover the additional expense of handling the lumber twice plus letting it sit with money tied up.

The kilns are heated with steam generated by burning the wood scraps and sawdust produced in the plant, but the four plant boilers have some five or six motors over two hundred horse power total on each boiler, plus the kilns have numerous powerful electrically driven fans to circulate the hot air.

With rising electricity costs and stagnant wages, it seems likely that numerous businesses will eventually find ways to substitute at least some labor for some electricity.

Hard up homemakers will find lots of ways to substitute labor for electricity.

I'm not too awfully hard up, but I have invested almost a grand and lots of spare minutes this summer building a solar domestic hot water system - I expect it to save up to two hundred kilowatt hours per month starting next week.

We have conserved half or more of our hot water needs since June by bathing almost every day in our swimming pool, which has been TOASTY warm all summer; I refill it with gravity fed spring water every couple of weeks, no chemicals needed.

This is a VERY pleasant way to wind up a sweaty day outside. ;)

Hi Mac,

That's a fair question and one best answered by Gail, herself. However, I should have made clear that this is the authors' position as summarized by Gail. For clarity, the full paragraph reads as follows:

My apologies to Gail and the other participants in this conversation for my muddled wording.

Cheers,

Paul

1/10 hp perhaps bicycling, but most people cannot do this for hours at a time. Food intake would go up by far more than savings.

Muscle power 4 to 5% efficient, electrical generation converted back to work with motor 30%.

Food takes 10 k cal fossil fuel to produce 1 k cal food. Electricity takes 3 k cal to produce 1 k cal work.

The first Newcomen steam engine was rated 80 hp but replaced a team of 500 horses.

It's all in the mitochondria. If we can genetically pack an extra 10x into the muscle cells we can do 10 times the workload. ATP, ye canna beat it.

"Food takes 10 k cal fossil fuel to produce 1 k cal food."

True of industrial ag, but obviously not of all ag.

Haven't seen some of those Bulgarian weight lifters have you? But that's really my point; even if you were to find someone who possesses tremendous strength and stamina, their best efforts can be outshadowed by as little as 0.6 kWh of electricity.

Also, to be fair, I wrote: "An individual in good physical health could sustain about 1/10th of a horsepower of manual effort, although not likely for eight continuous hours".

Cheers,

Paul

"...not the motors. Wages may fall precariously, but I can't imagine they could ever fall to the point where motive power would be uncompetitive with human toil."

Interesting that - A tale told on TOD (or some other peak oil site) was of some metal workers and how it was cheaper to send some big container making to Russia during the collapse of their economy where the containers could be assembled and hand ground (with the sub 2kwh hand grinders) than to have machine made in the US of A.

I've pitched how a $500, 150-watt solar panel is like having a slave who can provide a man's labor every day while the sun shines for 20 years.

Hi Eric,

I'd be curious to learn more. Was the American plant fully automated and the cost of electricity was the sole determining factor in this decision to ship production overseas or were there other considerations as well? Let's assume it takes 500 kWh of electricity to assemble a single shipping container (250 kW over two hours, say) -- at 10-cents per kWh, that cost is just $50.00. [I don't know what would be a reasonable number, but my hunch is that the time required to stamp and robot weld a shipping container can be measured in minutes as opposed to hours and that 250 kW is on the high side.]
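As a rough check on the hypothetical numbers above, here is a minimal sketch. The 250 kW draw and two-hour assembly time are the guesses stated in the paragraph, not measured values, and the function name is made up for illustration.

```python
def electricity_cost_per_unit(power_kw, hours, price_per_kwh):
    """Energy used and electricity cost for one production run."""
    energy_kwh = power_kw * hours            # kW times hours gives kWh
    return energy_kwh, energy_kwh * price_per_kwh


# Guessed draw and duration from the comment above, at 10 cents per kWh.
energy, cost = electricity_cost_per_unit(power_kw=250.0, hours=2.0, price_per_kwh=0.10)
print(f"{energy:.0f} kWh per container, ${cost:.2f} of electricity")
```

Even if the price per kWh were several times higher, the electricity bill per container would stay small next to the other costs of building and shipping one, which is the point being argued.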

Cheers,

Paul

You are asking me to outdo my search-fu and track down an offhand comment made over the course of 5 years that I happen to remember as it was an extreme example. I'm good at pointing to weird-arsed things.......but not THAT good.

Hopefully by mentioning it, someone else will have details and be able to expand on the matter. If not - file it as a 'myth' and move on.

Not good enough, Eric. I need names, dates, places, ring sizes and ring tones and I need it now !

All kidding aside, whatever the real reasons may be, I suspect the cost of electricity wasn't the primary factor and probably not even a serious consideration. With a few obvious exceptions, in most cases electricity is such a small percentage of the overall budget that, as another commenter here described it, it's mostly background noise.

Cheers,

Paul

And I'm happy to dig it up...when I can find the claim. Usually I remember enough of the details so I can ask the search engines to find the claim.

I'm sorry I failed you.

(takes out spork)

(starts stabbing chest)

And when you've finished with that spork, please take this whip and engage in a little further self flagellation. ;-)

If you can find something to pass along, it would be appreciated; if not, no sweat.

Cheers,

Paul

It seems to me that human labor wins when electricity is not available, rather than when it goes up in cost.

That might be true, JB, but I expect we'll do our best to keep the lights on regardless of the cost.

Cheers,

Paul

"As electricity costs rise, they will crowd out other spending."

An efficient household can get down to 500 kWh per person per year. At $0.20 per kWh that's $8.33 per month.
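The monthly figure is easy to reproduce. The sketch below uses only the consumption and price quoted above; the function name is an assumption added for illustration.

```python
def monthly_bill(kwh_per_year, price_per_kwh):
    """Average monthly electricity bill for a given yearly consumption."""
    return kwh_per_year * price_per_kwh / 12.0


# 500 kWh per person per year at $0.20 per kWh, as quoted above.
per_person = monthly_bill(kwh_per_year=500.0, price_per_kwh=0.20)
print(f"Per-person bill: ${per_person:.2f} per month")
```

Plugging in a different yearly consumption or price shows how sensitive the bill is to each assumption.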

So paying thousands of dollars for rent, health-care and income taxes is no problem, but woe betide us if those 8 bucks were to increase...

I'd say this scenario may be more likely than non-affordable electricity bills in a developed economy/society:

C'mon.

I picked a refrigerator at random and it uses more than 500 kWh/year. And it is a modern Energy Star one.

http://www.lowes.com/Attachment/energyguides/883049151021.pdf

In addition to having my food not spoil, I'd like to have some light, a washing machine, a computer, a TV. I'm not asking for much here.

500KWH/month is OK for a typical house.

This GE 18.2 cubic foot refrigerator uses 335 kWh/year.

http://www.homedepot.com/webapp/wcs/stores/servlet/ProductDisplay?storeI...

http://www.homedepot.com/catalog/pdfImages/8b/8b9da207-be44-48f2-bb07-e8...

Buy a MacMini computer (now 10 watts, mine is 14 watts) plus an efficient LED screen (say <35 watts actual that doubles as a small screen TV, my solution). CFL lights, perhaps coupled with motion sensors (my night lights are 0.7 watt LED motion sensors).

A Bosch Vision washing machine uses almost no electricity (yellow & black sticker is $9/year for 8 loads/week with natural gas hot water, but that $9 is for electricity and gas). Wash in cold water (I do) and use a solar clothes dryer.

"Energy Star" is just middling efficiency. VERY far from the best !

Best Hopes for Energy Efficiency,

Alan

I consider ourselves pretty thrifty and that's about as far down as we got. Double that is more typical.

While we live in a modest Apartment, we're in the mid 300 KWH's a month, with a typical array of appliances, Electric Stove, Dishwasher, Fridge, Freezer, Wash/Dry.

I think we'd all really have to look at which of our standard electrical appliances today are quite capable of being met with other means. Clothes drying on a line is clearly one we've been able to demur for some decades now, but when the 'Acceptable Utility Rate' starts showing the effects of PO, as I fear it must (if 'Energy' is as fungible as I suspect it is), then I also predict that we will be far less likely to use it for 'bulk energy' as we do now with Water Heating and Clothes Drying, etc., and the average household kWh numbers will push downwards.

There are some extremely High-Value uses for electricity, lighting and communications in particular, which would do much of the lifting required to retain the 'Advances' of contemporary life with a very reasonable fraction of the overall watt-hours, while even refrigeration, and certainly heating, can be considerably restructured to stop taking such bulk volumes of Electrical Supply for granted, while maintaining many of our current assets.